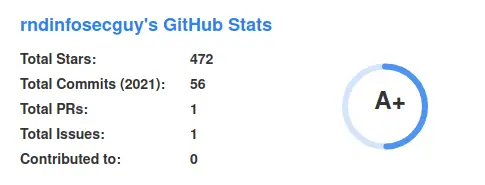

Scavenger – OSINT Bot – REWORKED

Bot In Action

Intro

This is the code of my OSINT bot, which searches paste sites for leaks of sensitive data.

Search terms:

- credentials

- private RSA keys

- WordPress configuration files

- MySQL connect strings

- onion links

- SQL dumps

- API keys

- complete emails

Search terms can be customized. You can learn more about it in the configuration section.
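This section does not describe how the bot matches these terms. The following is a minimal sketch that assumes a simple regular expression for email:password pairs (a hypothetical pattern, not necessarily the one the bot uses) and plain case-sensitive substring search for the other terms. `CREDENTIAL_RE`, `DEFAULT_TERMS` and `classify_paste` are illustrative names.

```python
import re

# Hypothetical pattern for "email:password" pairs: an email address,
# a colon, then a non-whitespace password. Not the bot's actual regex.
CREDENTIAL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+:\S+")

# A subset of the default search terms, matched as literal substrings.
DEFAULT_TERMS = ("mysqli_connect(", "BEGIN RSA PRIVATE KEY", "apiKey:", ".onion")


def classify_paste(text, terms=DEFAULT_TERMS):
    """Return (has_credentials, matched_terms) for the text of one paste."""
    has_credentials = CREDENTIAL_RE.search(text) is not None
    # Case-sensitive literal matching: each spelling must be listed separately.
    matched_terms = [term for term in terms if term in text]
    return has_credentials, matched_terms


if __name__ == "__main__":
    sample = "alice@example.com:hunter2\nvisit http://abc.onion"
    print(classify_paste(sample))  # (True, ['.onion'])
```

Substring search is cheap but literal. With case-sensitive matching, "insert into" and "INSERT INTO" have to be listed as separate terms.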

Articles About Scavenger

- https://jakecreps.com/2019/05/08/osint-collection-tools-for-pastebin/

- https://jakecreps.com/2019/01/08/scavenger/

- https://youtu.be/VCwiZ2dh17Q?t=51 (the bot is mentioned here)

Main Features

For pastebin.com the bot has two modes:

- looking for sensitive data in the archive via scraping

- looking for sensitive data by tracking users who publish leaks

Additional features:

- customizable search terms

- scan folders with text files for sensitive information

Configuration

1. Delete the README.md files in every subfolder, as they are only placeholders.

2. By default, the bot searches for email:password combinations and other kinds of sensitive data. If you want to add more search terms, edit the configs/searchterms.txt file or use the -3 switch of the control script. Default configs/searchterms.txt configuration:

    mysqli_connect(
    BEGIN RSA PRIVATE KEY
    The name of the database for WordPress
    apiKey:
    Return-Path:
    insert into
    INSERT INTO
    .onion

If you want to add other search terms, just add them to the file line by line. Do you know a useful search term that is missing here? Tell me! 🙂

3. For the user tracking module of pastebin.com, add the target users line by line to the configs/users.txt file.
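Both configuration files are plain text with one entry per line. Here is a minimal sketch of how such a file could be read. It assumes that blank lines are skipped and that only the line ending is removed, so spaces inside a term such as "insert into" survive. The bot's own parsing may differ. The helpers `load_lines` and `user_profile_urls` are hypothetical. The profile URL format https://pastebin.com/u/USERNAME is pastebin.com's public user page, and this sketch assumes it as the tracking target.

```python
from pathlib import Path


def load_lines(path):
    """Read a one-entry-per-line config file, skipping blank lines."""
    entries = []
    for line in Path(path).read_text(encoding="utf-8").splitlines():
        # splitlines() drops "\n" / "\r\n"; the term itself is kept as written.
        if line.strip():
            entries.append(line)
    return entries


def user_profile_urls(users):
    """Build pastebin.com profile URLs for tracked users (assumed URL format)."""
    return [f"https://pastebin.com/u/{user.strip()}" for user in users]
```

For example, `load_lines("configs/searchterms.txt")` would return the default terms listed above as a Python list, and `user_profile_urls(load_lines("configs/users.txt"))` would return one profile URL per tracked user.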

Usage

Program help:

$ python3 scavenger.py -h

    [ASCII-art banner: Scavenger Reworked]

    usage: scavenger.py [-h] [-0] [-1] [-2] [-3] [-4]

    control script

    optional arguments:
      -h, --help           show this help message and exit
      -0, --pbincom        Activate pastebin.com archive scraping module
      -1, --pbincomTrack   Activate pastebin.com user tracking module
      -2, --sensitivedata  Search a specific folder for sensitive data. This might
                           be useful if you want to analyze some pastes which
                           were not collected by the bot.
      -3, --editsearch     Edit search terms file for additional search terms
                           (email:password combinations will always be searched)
      -4, --editusers      Edit user file of the pastebin.com user track module

    example usage: python3 scavenger.py -0 -1
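This help screen has the layout produced by Python's argparse module. The following sketch builds a parser with the same switches and descriptions shown above. This section does not show how scavenger.py acts on the switches, so `selected_modules` is a hypothetical placeholder that only reports which switches were given.

```python
import argparse


def build_parser():
    """Recreate the control script's options as shown in the help text."""
    parser = argparse.ArgumentParser(
        prog="scavenger.py",
        description="control script",
        epilog="example usage: python3 scavenger.py -0 -1",
    )
    parser.add_argument("-0", "--pbincom", action="store_true",
                        help="Activate pastebin.com archive scraping module")
    parser.add_argument("-1", "--pbincomTrack", action="store_true",
                        help="Activate pastebin.com user tracking module")
    parser.add_argument("-2", "--sensitivedata", action="store_true",
                        help="Search a specific folder for sensitive data. This might "
                             "be useful if you want to analyze some pastes which "
                             "were not collected by the bot.")
    parser.add_argument("-3", "--editsearch", action="store_true",
                        help="Edit search terms file for additional search terms "
                             "(email:password combinations will always be searched)")
    parser.add_argument("-4", "--editusers", action="store_true",
                        help="Edit user file of the pastebin.com user track module")
    return parser


def selected_modules(argv=None):
    """Return the names of the switches given on the command line."""
    args = build_parser().parse_args(argv)
    # Hypothetical: the real script would start or edit modules at this point.
    return [name for name, enabled in vars(args).items() if enabled]
```

argparse accepts digit-only short options such as -0 as long as the parser defines them. On Python 3.10 and newer, argparse labels the section "options:" instead of "optional arguments:".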

Crawled pastes are stored at different locations depending on their status.

- Paste crawled but nothing was detected -> data/raw_pastes

- Paste crawled and an email:password combination was detected -> data/raw_pastes and data/files_with_passwords

- Paste crawled and other sensitive data was detected -> data/raw_pastes and data/otherSensitivePastes
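These storage rules reduce to a small routing decision. The following minimal sketch assumes that the detection result is available as two booleans. The function names and the `<paste_id>.txt` file naming are hypothetical. The text above does not say where a paste that has both kinds of findings goes. This sketch assumes it is written to all three folders.

```python
from pathlib import Path

RAW = Path("data/raw_pastes")
PASSWORDS = Path("data/files_with_passwords")
OTHER = Path("data/otherSensitivePastes")


def target_folders(has_credentials, has_other_sensitive):
    """Return every folder a crawled paste should be written to."""
    folders = [RAW]  # every crawled paste is kept in raw_pastes
    if has_credentials:
        folders.append(PASSWORDS)
    if has_other_sensitive:
        # Assumption: a paste with both kinds of findings goes to both folders.
        folders.append(OTHER)
    return folders


def store_paste(paste_id, text, has_credentials, has_other_sensitive, base=Path(".")):
    """Write the paste as <paste_id>.txt (hypothetical name) into each target folder."""
    written = []
    for folder in target_folders(has_credentials, has_other_sensitive):
        dest = base / folder
        dest.mkdir(parents=True, exist_ok=True)
        path = dest / f"{paste_id}.txt"
        path.write_text(text, encoding="utf-8")
        written.append(path)
    return written
```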

Pastes are stored in data/raw_pastes until the folder reaches a limit of 48000 files. Once there are more than 48000 pastes, they are zipped and moved to the archive folder.
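Here is a minimal sketch of this rotation using Python's standard zipfile module. The text only mentions "the archive folder," so the archive location is passed in as a parameter, and the timestamp-based zip name is a hypothetical choice. The limit is also a parameter, so the logic can be tried with small numbers.

```python
import time
import zipfile
from pathlib import Path

LIMIT = 48000  # rotate once there are more than 48000 pastes


def rotate_if_full(raw_dir, archive_dir, limit=LIMIT):
    """Zip all pastes in raw_dir into archive_dir once there are more than limit.

    Returns the path of the new zip file, or None if no rotation happened.
    """
    raw_dir, archive_dir = Path(raw_dir), Path(archive_dir)
    pastes = [p for p in raw_dir.iterdir() if p.is_file()]
    if len(pastes) <= limit:
        return None
    archive_dir.mkdir(parents=True, exist_ok=True)
    # Hypothetical naming scheme: one zip per rotation, named by timestamp.
    zip_path = archive_dir / f"pastes_{time.strftime('%Y%m%d_%H%M%S')}.zip"
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for paste in pastes:
            zf.write(paste, arcname=paste.name)
    # Delete the originals only after the zip has been written and closed.
    for paste in pastes:
        paste.unlink()
    return zip_path
```

For example, `rotate_if_full("data/raw_pastes", "data/archive")` would do nothing until the 48001st paste arrives. Here, "data/archive" is an assumed path.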

Start the pastebin.com archive scraping module

$ python3 scavenger.py -0

Start the pastebin.com user tracking module

$ python3 scavenger.py -1

When starting one of these modules, a tmux session with the running module is created in the background.
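The following sketch shows how a control script could start a module in a detached tmux session from Python, using tmux's `new-session -d -s NAME COMMAND` form. The session names and scripts are taken from this section. The assumption is that scavenger.py launches them exactly this way, which this section does not show. `MODULES`, `tmux_command` and `start_module` are hypothetical names.

```python
import shutil
import subprocess

# Session name and command for each module, as listed in this section.
MODULES = {
    "pbincom": ("pastebincomArchive", "python3 pbincomArchiveScrape.py"),
    "pbincomTrack": ("pastebincomTrack", "python3 pbincomTrackUser.py"),
}


def tmux_command(session, command):
    """Build the argv that runs `command` in a new detached tmux session."""
    return ["tmux", "new-session", "-d", "-s", session, command]


def start_module(module):
    """Start one module in the background; requires tmux on PATH."""
    if shutil.which("tmux") is None:
        raise RuntimeError("tmux is not installed")
    session, command = MODULES[module]
    subprocess.run(tmux_command(session, command), check=True)
```

The `-d` flag is what keeps the session in the background. `check=True` raises an error if tmux refuses, for example because a session with that name already exists.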

List tmux sessions

$ tmux ls
pastebincomArchive: 1 windows (created Sun Apr 14 06:33:32 2021) [204x58]
pastebincomTrack: 1 windows (created Sun Apr 14 06:33:32 2021) [204x58]

Attach to a tmux session, for example:

$ tmux a -t pastebincomArchive

$ tmux a -t pastebincomTrack

To detach from a session, press Ctrl+b, then d.

If you want to start a module without the control script, you can call it directly.

Pastebin.com archive scraper

$ python3 pbincomArchiveScrape.py

Pastebin.com user tracker

$ python3 pbincomTrackUser.py

Search a specific folder for sensitive data:

$ python3 findSensitiveData.py TARGET_FOLDER
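Here is a standalone sketch of what a folder scan like this could do. It walks the folder, reads every file as text, and reports which files contain which search terms. It assumes UTF-8 files, replacing undecodable bytes, and plain substring matching. The function name `scan_folder` and the sample term list are hypothetical. findSensitiveData.py may work differently.

```python
import sys
from pathlib import Path


def scan_folder(folder, terms):
    """Map each file under folder (recursively) to the search terms it contains."""
    hits = {}
    for path in sorted(Path(folder).rglob("*")):
        if not path.is_file():
            continue
        # Pastes are text; replace undecodable bytes instead of failing.
        text = path.read_text(encoding="utf-8", errors="replace")
        found = [term for term in terms if term in text]
        if found:
            hits[str(path)] = found
    return hits


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 scan_sketch.py TARGET_FOLDER
    sample_terms = ["mysqli_connect(", "BEGIN RSA PRIVATE KEY", ".onion"]
    for name, found in scan_folder(sys.argv[1], sample_terms).items():
        print(name, found)
```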

To Do

If you are missing anything and want me to add features or make changes, just let me know via Twitter or a GitHub issue 🙂
